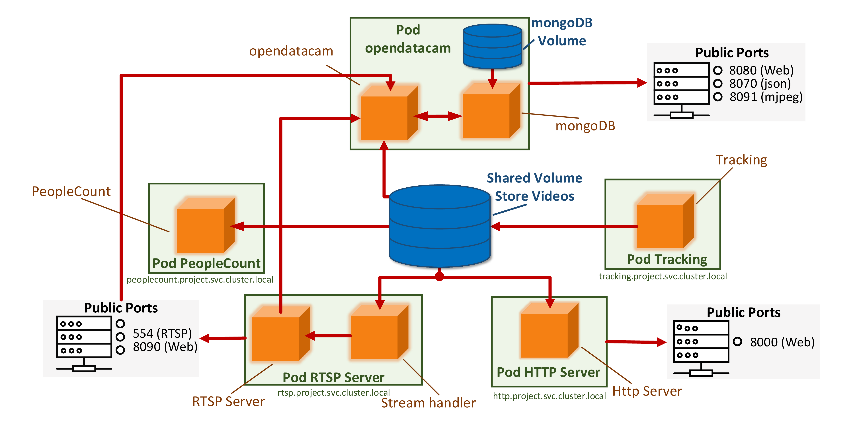

Combining the Internet of Things (IoT) with deep learning usually runs into resource constraints, in particular limited bandwidth and limited computing power, which often cause system freezes or delays. Upgrading the hardware of an IoT system is expensive, whereas a lightweight deep learning model reduces hardware resource consumption and can adapt to real deployment scenarios. In this paper, we combine IoT technology with an improved version of the lightweight deep learning model YOLOv5 to assist with mask detection, vehicle counting, and target tracking without consuming excessive computing resources. We deployed the improved YOLOv5 on the server side and completed training inside a container. The trained weight file was deployed in Docker and combined with Kubernetes to obtain the final experimental results. The results can be displayed by opening a browser on an edge node and entering the relevant IP address. Users can also operate on the results in the browser front end, for example by drawing a horizontal line across the road to count vehicles locally. These operations are fed back to the server for interaction with developers. Compared with the previous version, the improved YOLOv5 recognizes targets faster and more accurately, requires less storage space, and is easier to deploy, which makes it more practical to run on edge nodes. Theoretical analysis and experimental results verify the feasibility and superiority of the proposed method.
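The line-based vehicle count mentioned above can be illustrated with a minimal sketch. It is not the paper's implementation: it assumes that a tracker already supplies, for each vehicle, a list of bounding-box centroids in frame order, and that the user-drawn line spans the full image width at height `line_y`. The function name `count_line_crossings` and the sample tracks are hypothetical.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (x, y) centroid in image coordinates


def count_line_crossings(tracks: Dict[int, List[Point]], line_y: float) -> int:
    """Count tracks whose centroid crosses the horizontal line y = line_y.

    Assumption: `tracks` maps a tracker-assigned ID to that object's
    centroids in frame order. Each track is counted at most once.
    """
    counted = 0
    for centroids in tracks.values():
        # Compare each centroid with the next one in time.
        for (_, y_prev), (_, y_curr) in zip(centroids, centroids[1:]):
            # The sides differ when exactly one of the two points is above the line.
            if (y_prev < line_y) != (y_curr < line_y):
                counted += 1
                break  # do not count the same vehicle twice
    return counted


# Hypothetical tracks: IDs 1 and 3 cross y = 200, ID 2 stays above it.
tracks = {
    1: [(100, 180), (102, 195), (104, 210)],
    2: [(300, 150), (301, 160), (302, 170)],
    3: [(50, 230), (52, 205), (55, 190)],
}
print(count_line_crossings(tracks, line_y=200))
```

Counting each track ID only once keeps a vehicle that jitters around the line from inflating the total; a direction-aware count would additionally record the sign of the change in `y`.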