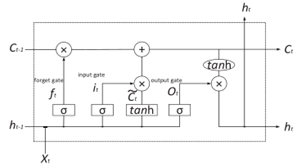

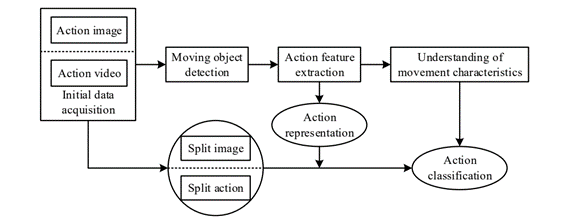

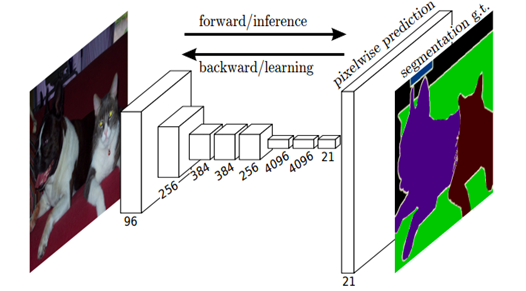

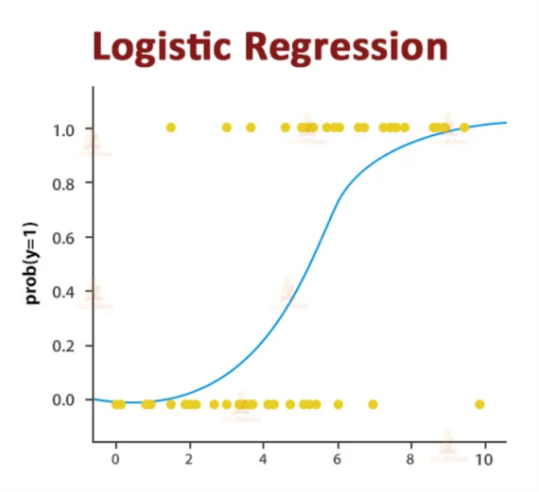

The emergence of cryptocurrencies has transformed the concept of digital currency and attracted significant attention from financial markets. Predicting cryptocurrency prices is important but challenging because price series are highly volatile and non-linear. This study compares the performance of several models in predicting cryptocurrency prices on three datasets: Bitcoin (BTC), Litecoin (LTC), and Ethereum (ETH). The models analyzed are Moving Average (MA), Logistic Regression (LR), Autoregressive Integrated Moving Average (ARIMA), Long Short-Term Memory (LSTM), and Convolutional Neural Network-Long Short-Term Memory (CNN-LSTM). The objective is to uncover underlying patterns in cryptocurrency price movements and to identify the most accurate and reliable approach for predicting future prices. The analysis shows that the MA, LR, and ARIMA models struggle to capture the actual price trend. In contrast, the LSTM and CNN-LSTM models fit the actual price trend closely, with CNN-LSTM producing more fine-grained predictions. The results suggest that deep learning architectures, particularly CNN-LSTM, show promise in capturing the complex dynamics of cryptocurrency prices. These findings contribute to the development of improved methodologies for cryptocurrency price prediction.

Research Article

Open Access