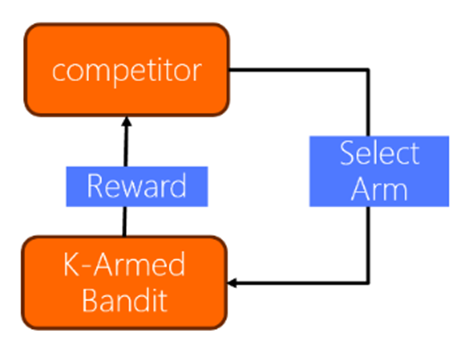

As Internet technology continues to evolve, recommender systems have become an integral part of daily life, yet traditional methods increasingly fall short of user expectations. Using data from the MovieLens dataset, this study takes a comparative approach to the efficacy, performance, and applicability of the UCB (Upper Confidence Bound) algorithm for the multi-armed bandit problem. The results show that the UCB algorithm significantly affects cumulative regret, indicating robust performance in the multi-armed bandit setting. Furthermore, LinUCB, an enhanced version of the UCB algorithm, performs exceptionally well overall. Its advantages are not limited to regret: it also handles high-dimensional feature spaces and delivers personalized recommendations. Unlike traditional UCB algorithms, LinUCB adapts more readily to high-dimensional environments by using a linear model of the reward function associated with each arm, which makes it particularly effective in complex, feature-rich recommendation scenarios. The performance of the UCB algorithm also depends on parameter selection, an important consideration in practical implementations. Overall, both UCB and its modified version, LinUCB, offer compelling solutions to the challenges faced by modern recommender systems.
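To make the idea of a per-arm linear reward model concrete, the following is a minimal sketch of the commonly described disjoint form of LinUCB, written with the standard library only. It is an illustration under stated assumptions, not the implementation used in this study: the names `LinUCBArm` and `run_linucb`, the exploration weight `alpha`, the identity ridge regularizer, and the synthetic `true_thetas` environment are all hypothetical choices made for the example, and no MovieLens data is involved.

```python
import math
import random


class LinUCBArm:
    """One arm of disjoint LinUCB with a ridge-regression reward model."""

    def __init__(self, dim, alpha):
        self.alpha = alpha  # exploration weight (hypothetical tunable parameter)
        # Store A^-1 directly; A starts as the identity (ridge regularizer of 1).
        self.A_inv = [[1.0 if i == j else 0.0 for j in range(dim)]
                      for i in range(dim)]
        self.b = [0.0] * dim

    def _A_inv_times(self, v):
        return [sum(row[j] * v[j] for j in range(len(v))) for row in self.A_inv]

    def score(self, x):
        """Upper confidence bound: theta^T x + alpha * sqrt(x^T A^-1 x)."""
        theta = self._A_inv_times(self.b)
        A_inv_x = self._A_inv_times(x)
        mean = sum(t * xi for t, xi in zip(theta, x))
        width = math.sqrt(max(sum(xi * v for xi, v in zip(x, A_inv_x)), 0.0))
        return mean + self.alpha * width

    def update(self, x, reward):
        """Apply A += x x^T via the Sherman-Morrison identity; b += reward * x."""
        A_inv_x = self._A_inv_times(x)
        denom = 1.0 + sum(xi * v for xi, v in zip(x, A_inv_x))
        n = len(x)
        for i in range(n):
            for j in range(n):
                self.A_inv[i][j] -= A_inv_x[i] * A_inv_x[j] / denom
        self.b = [bi + reward * xi for bi, xi in zip(self.b, x)]


def run_linucb(true_thetas, n_rounds=2000, alpha=1.0, seed=0):
    """Simulate LinUCB on a synthetic linear bandit; return cumulative regret."""
    rng = random.Random(seed)
    dim = len(true_thetas[0])
    arms = [LinUCBArm(dim, alpha) for _ in true_thetas]
    regret = 0.0
    for _ in range(n_rounds):
        x = [rng.random() for _ in range(dim)]  # shared context for this round
        expected = [sum(t * xi for t, xi in zip(theta, x))
                    for theta in true_thetas]
        chosen = max(range(len(arms)), key=lambda a: arms[a].score(x))
        reward = expected[chosen] + rng.gauss(0.0, 0.1)  # noisy observed reward
        arms[chosen].update(x, reward)
        regret += max(expected) - expected[chosen]
    return regret


if __name__ == "__main__":
    # Two arms whose true rewards depend on different context features.
    for alpha in (0.1, 1.0, 5.0):
        print(alpha, round(run_linucb([[1.0, 0.0], [0.0, 1.0]], alpha=alpha), 2))
```

Running the script with several values of `alpha` gives a rough sense of how the exploration parameter changes cumulative regret in this toy setting. The numbers it prints say nothing about the MovieLens results reported here.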