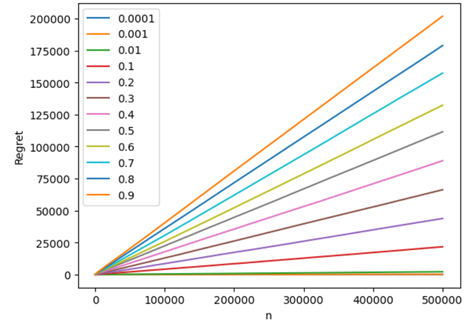

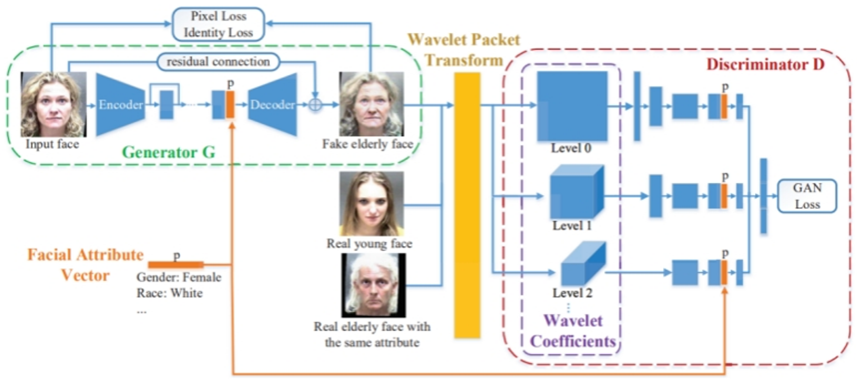

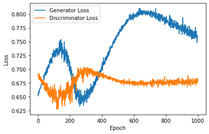

The rapid advancement of technology has led people to rely increasingly on Artificial Intelligence (AI) for laborious tasks. This study investigates approaches to improving the performance of generative adversarial network (GAN) models during training. A Generator and a Discriminator are built and coupled together. For the baseline experiment, both learning rates are set to 0.0002, the number of epochs to 400, and the batch size to 128. The simple GAN model is then reimplemented by combining these components. The experiments centre on tuning model parameters, replacing the original loss function, and observing the training process of each model. First, the number of training epochs is increased to 1000 without modifying the model structure, so that training can be observed over a longer run. Second, the number of epochs is raised to 5000, the batch size is changed to 512, and the model's performance is assessed at three learning rates: 0.0001, 0.0002, and 0.0003. Finally, the generator learning rate is set to 0.0007, the discriminator learning rate to 0.0003, and the original binary cross-entropy loss function is replaced with the Wasserstein loss. Across the three experiments, the configuration that improves the GAN most is replacing the original loss function with the Wasserstein (Earth Mover) distance and raising the discriminator and generator learning rates to 0.0003 and 0.0007, respectively. With these adjustments, both losses decrease slowly and begin to stabilize toward 0 at 2000 epochs.
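
To make the change of loss function concrete, the following is a minimal sketch of the two objectives compared in this study, written in plain Python over lists of discriminator outputs. It is an illustration under stated assumptions and not the study's implementation: the function names `bce_losses` and `wasserstein_losses`, the dictionary layout, and the use of the non-saturating generator loss are all hypothetical choices. Only the numeric hyperparameters are taken from the text.

```python
import math

# Hyperparameters quoted in the abstract; the dictionary layout and key
# names are illustrative assumptions, not the study's actual configuration.
BASELINE = {"lr_g": 0.0002, "lr_d": 0.0002, "epochs": 400, "batch_size": 128}
WASSERSTEIN_RUN = {"lr_g": 0.0007, "lr_d": 0.0003}


def _mean(values):
    """Arithmetic mean of a non-empty list of floats."""
    return sum(values) / len(values)


def bce_losses(d_real, d_fake, eps=1e-12):
    """Binary cross-entropy GAN losses.

    d_real, d_fake: discriminator probabilities in (0, 1) for real and
    generated samples. Returns (discriminator_loss, generator_loss).
    The generator term is the common non-saturating form (an assumption).
    """
    d_loss = (-_mean([math.log(p + eps) for p in d_real])
              - _mean([math.log(1.0 - p + eps) for p in d_fake]))
    g_loss = -_mean([math.log(p + eps) for p in d_fake])
    return d_loss, g_loss


def wasserstein_losses(c_real, c_fake):
    """Wasserstein (Earth Mover) losses.

    c_real, c_fake: unbounded critic scores for real and generated samples.
    The critic minimizes mean(fake) - mean(real); the generator minimizes
    -mean(fake). Returns (critic_loss, generator_loss).
    """
    d_loss = _mean(c_fake) - _mean(c_real)
    g_loss = -_mean(c_fake)
    return d_loss, g_loss
```

Note that the Wasserstein formulation assumes the critic is constrained to be approximately 1-Lipschitz, typically through weight clipping or a gradient penalty. The text does not say which constraint was used, so the sketch leaves it out.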