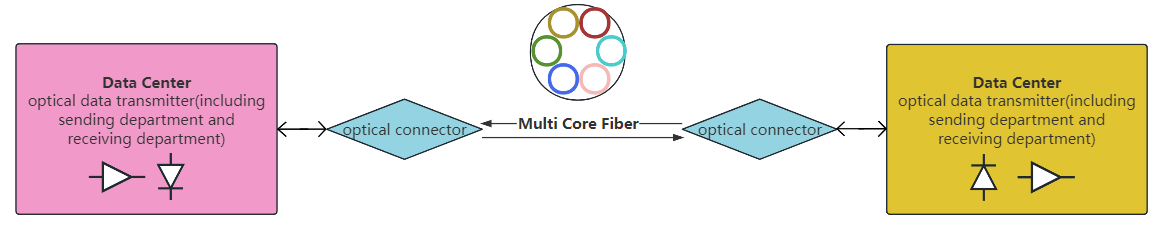

With the rapid advancement of optical fiber communication technology, the speed and reach of information transmission have increased dramatically. Multi-core fibers (MCFs), which carry several cores within a single cladding, enable high-capacity, high-density transmission. However, four-wave mixing (FWM) and inter-core crosstalk (ICXT) generate noise during transmission that significantly degrades signal quality. Based on the characteristics of FWM and ICXT noise, we propose two noise-suppression schemes. The first reduces noise power by increasing the wavelength spacing or by using unequally spaced wavelengths, so that the phase-matching condition is less easily satisfied. The second alters the structure of the MCF: in weakly coupled MCFs, longitudinal random bending, twisting, and structural fluctuations randomly perturb the power-coupling coefficient. The noise power expression further shows that shortening the effective fiber length, enlarging the effective core area, and operating the MCF in a band where nonlinear effects are weak can effectively reduce noise generation. Finally, we evaluate the proposed schemes and analyze and discuss the results.
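To make the FWM side of these claims concrete, the sketch below evaluates a commonly used textbook model rather than the authors' own formula. It assumes the classic FWM efficiency η = α²/(α²+Δβ²)·[1 + 4e^{−αL}sin²(ΔβL/2)/(1−e^{−αL})²], a phase mismatch Δβ that grows with the product of the frequency offsets, and a product power P_ijk = η(d/3)²γ²P_iP_jP_kL_eff²e^{−αL} with degeneracy factor d = 3 or 6. The values of n₂, loss, dispersion, and span length, as well as every function name, are hypothetical illustrations. The sketch shows three trends from the abstract: wider spacing lowers η, a larger effective area lowers γ and therefore the FWM power, and unequal (Golomb-ruler) spacing keeps every FWM product off the channel grid.

```python
import math
from itertools import product

C = 299_792_458.0   # speed of light, m/s
N2 = 2.6e-20        # assumed nonlinear index of silica, m^2/W

def alpha_per_m(loss_db_per_km):
    """Convert attenuation from dB/km to a power attenuation coefficient in 1/m."""
    return loss_db_per_km / (10 * math.log10(math.e)) / 1e3

def effective_length(alpha, length_m):
    """Effective length L_eff = (1 - exp(-alpha L)) / alpha."""
    return (1 - math.exp(-alpha * length_m)) / alpha

def gamma(a_eff_m2, wavelength_m=1550e-9):
    """Nonlinear coefficient gamma = 2*pi*n2 / (lambda * A_eff), in 1/(W m)."""
    return 2 * math.pi * N2 / (wavelength_m * a_eff_m2)

def phase_mismatch(df_ik, df_jk, disp, slope, wavelength_m=1550e-9):
    """Delta-beta (1/m) for the product f_i + f_j - f_k.
    df_* in Hz; disp = D in s/m^2; slope = dD/dlambda in s/m^3 (assumed model)."""
    lam2 = wavelength_m ** 2
    return (2 * math.pi * lam2 / C) * df_ik * df_jk * (
        disp + lam2 / (2 * C) * slope * (df_ik + df_jk))

def fwm_efficiency(dbeta, alpha, length_m):
    """FWM efficiency eta; equals 1 at perfect phase matching (dbeta = 0)."""
    e = math.exp(-alpha * length_m)
    return (alpha ** 2 / (alpha ** 2 + dbeta ** 2)) * (
        1 + 4 * e * math.sin(dbeta * length_m / 2) ** 2 / (1 - e) ** 2)

def fwm_power(p_i, p_j, p_k, eta, g, alpha, length_m, degenerate):
    """P_ijk = eta * (d/3)^2 * gamma^2 * P_i P_j P_k * L_eff^2 * exp(-alpha L)."""
    d = 3 if degenerate else 6
    l_eff = effective_length(alpha, length_m)
    return (eta * (d / 3) ** 2 * g ** 2 * p_i * p_j * p_k
            * l_eff ** 2 * math.exp(-alpha * length_m))

def inband_products(freqs_ghz):
    """Count products f_i + f_j - f_k (k != i, k != j) that land on a channel."""
    chans = set(freqs_ghz)
    n = len(freqs_ghz)
    hits = 0
    for i, j, k in product(range(n), repeat=3):
        # i <= j avoids counting the same (i, j) pair twice
        if i <= j and k != i and k != j:
            if freqs_ghz[i] + freqs_ghz[j] - freqs_ghz[k] in chans:
                hits += 1
    return hits

if __name__ == "__main__":
    a, L = alpha_per_m(0.2), 80e3   # assumed 0.2 dB/km loss, 80 km span
    disp, slope = 17e-6, 70.0       # assumed D = 17 ps/(nm km), S = 0.07 ps/(nm^2 km)
    for spacing in (25e9, 50e9, 100e9):
        # degenerate product (i == j): both offsets equal the channel spacing
        eta = fwm_efficiency(phase_mismatch(spacing, spacing, disp, slope), a, L)
        print(f"{spacing / 1e9:.0f} GHz spacing: eta = {eta:.2e}")
    for a_eff in (80e-12, 160e-12):
        # eta = 1 gives the worst (phase-matched) case
        p = fwm_power(1e-3, 1e-3, 1e-3, 1.0, gamma(a_eff), a, L, degenerate=True)
        print(f"A_eff = {a_eff * 1e12:.0f} um^2: worst-case P_FWM = {p:.2e} W")
    print("in-band products, equal spacing:", inband_products([0, 100, 200, 300]))
    print("in-band products, unequal spacing:", inband_products([0, 100, 400, 600]))
```

The unequal grid {0, 100, 400, 600} GHz is a scaled Golomb ruler. All of its pairwise differences are distinct, so no f_i + f_j − f_k with k ≠ i, j can coincide with a channel. Integer GHz values keep the set-membership check exact.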
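For the ICXT side, a widely used coupled-power approximation for two identical cores in a weakly coupled MCF under random bending and twisting is assumed here. It is not necessarily the model used in this work. The average power-coupling coefficient is taken as h ≈ 2κ²R/(βΛ), where κ is the mode-coupling coefficient, R the bend radius, β the propagation constant, and Λ the core pitch. The mean crosstalk is XT = tanh(hL). The numeric values and function names below are hypothetical. The sketch only illustrates how the structural parameters feed into h and how XT accumulates with length.

```python
import math

def propagation_constant(n_eff, wavelength_m=1550e-9):
    """beta = 2*pi*n_eff / lambda, in 1/m."""
    return 2 * math.pi * n_eff / wavelength_m

def power_coupling_coeff(kappa, bend_radius_m, beta, pitch_m):
    """Assumed approximation for identical cores: h ~ 2 kappa^2 R / (beta * Lambda), in 1/m."""
    return 2 * kappa ** 2 * bend_radius_m / (beta * pitch_m)

def mean_crosstalk_db(h, length_m):
    """Mean two-core crosstalk from coupled-power theory, XT = tanh(h L), in dB."""
    return 10 * math.log10(math.tanh(h * length_m))

if __name__ == "__main__":
    beta = propagation_constant(1.45)   # assumed effective index
    kappa, pitch = 1e-2, 45e-6          # hypothetical kappa (1/m) and core pitch (m)
    for radius in (0.05, 0.14, 1.0):
        h = power_coupling_coeff(kappa, radius, beta, pitch)
        xt = mean_crosstalk_db(h, 100e3)
        print(f"R = {radius} m: h = {h:.2e} 1/m, XT(100 km) = {xt:.1f} dB")
```

Under this approximation, h scales with κ², so a larger core pitch or stronger confinement (smaller κ) lowers crosstalk. For small hL, XT ≈ hL grows roughly linearly with fiber length.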