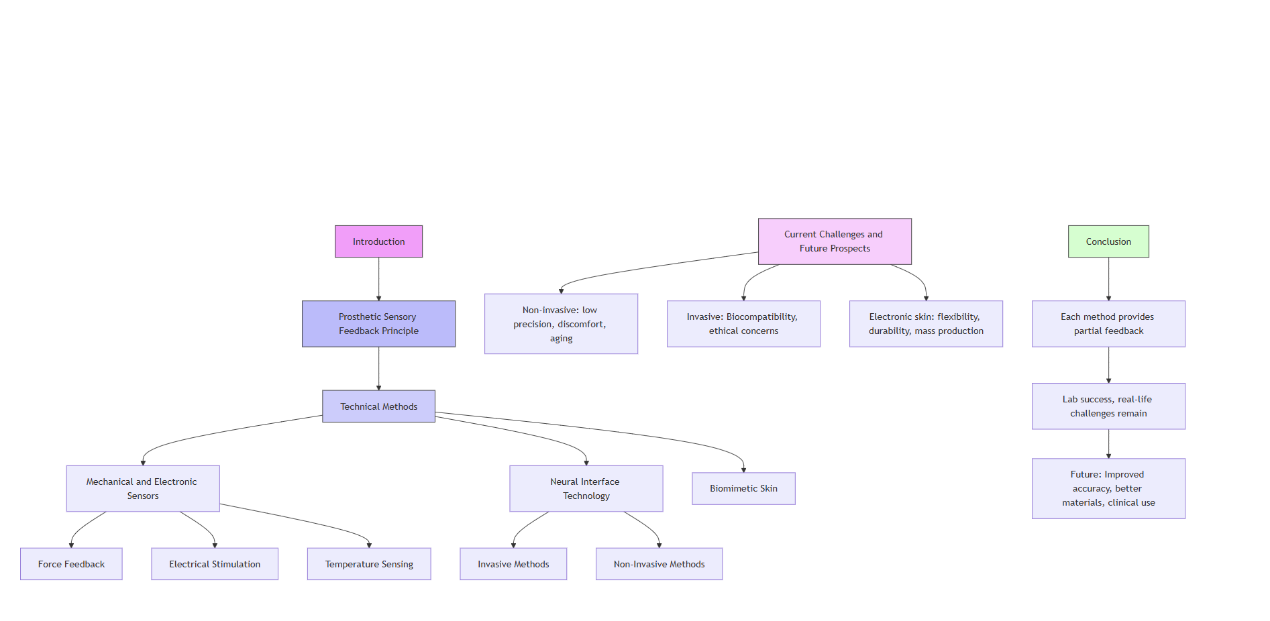

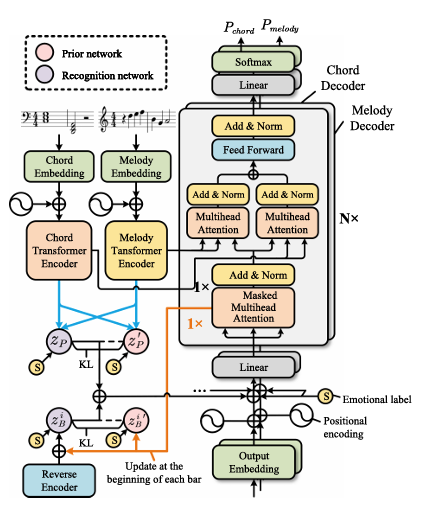

With the rapid evolution of artificial intelligence, emotion-conditioned music generation has become a focal point in computer music research. This study examines how advanced machine learning models, especially those developed in the last five years, enable the generation of music that aligns with specific emotional categories. It begins by tracing the historical development of computer music and of emotional expression in music, followed by an analysis of emotion evaluation methods. It then reviews and compares the performance of three state-of-the-art Transformer-based models: EmoMusicTV, Emotion Token Transformer, and a continuous-valued emotion model. The findings show that models with hierarchical structures and continuous emotion control offer greater flexibility and emotional accuracy. However, challenges remain in data quality, emotional subjectivity, structural coherence, and evaluation consistency. The study concludes by proposing future research directions, such as multimodal conditioning, cross-cultural modeling, and symbolic-audio hybrid systems. This work contributes a comprehensive overview of current technologies and lays the foundation for developing emotionally intelligent music generation systems that bridge AI and human affect.